After winning the 2023 edition of the Challenge organized by the AID (Defence Innovation Agency), the Friendly Hackers team from Thales stands out once again in 2024 with a particularly ingenious technology: a metamodel for detecting AI-generated images (deepfakes).

The Thales metamodel is built on an aggregation of models, each assigning an authenticity score to each image.

Such AI-generated content (images, videos and audio) is increasingly used for disinformation, but also for manipulation and identity fraud.

MEUDON, France, November 22, 2024 -/African Media Agency (AMA)/- At the European Cyber Week, held in Rennes from November 19 to 21, 2024 with artificial intelligence as its central theme, Thales teams took second place in the AID Challenge with a metamodel for detecting AI-generated images. At a time when disinformation is spreading across the media and every sector of the economy as AI techniques become widespread, this tool aims to combat image manipulation in use cases such as identity fraud.

AI-generated images are produced with modern AI platforms (Midjourney, Dall-E, Firefly, etc.). AI technologies have now evolved so far that it is almost impossible for the naked eye to distinguish a real image from an AI-generated one. The same applies to video, even in real time. An AI-generated image can therefore open the door to malicious attackers, who can use it for identity theft and fraud. Some studies predict that within a few years, deepfakes could cause massive financial losses through identity theft and fraud. Gartner has estimated that in 2023, around 20% of cyberattacks could include deepfake content as part of disinformation or manipulation campaigns. Its report highlights the rise of deepfakes in financial fraud and advanced phishing attacks.

« The Thales metamodel for detecting deepfakes addresses, in particular, the problem of identity fraud and the morphing technique. Aggregating several methods based on neural networks, noise detection and spatial frequencies will make it possible to better secure the growing number of solutions that require identity verification by biometric recognition. This is a remarkable technological advance, resulting from the expertise of Thales AI researchers, » says Christophe Meyer, Senior AI Expert and Technical Director at cortAIx, Thales’ AI accelerator.

The Thales metamodel draws on machine learning techniques, decision trees and an evaluation of each model's strengths and weaknesses to analyze the authenticity of an image. It combines different models, including:

- The CLIP (Contrastive Language–Image Pre-training) method, which links images and text by learning how an image and its textual description correspond. In other words, CLIP learns to associate visual elements (such as a photo) with the words that describe them. To detect deepfakes, CLIP can analyze images and evaluate how well they match text descriptions, thus identifying inconsistencies or visual anomalies.

- The DNF method, which uses the same architectures as current image generators ("diffusion" models) to detect the images they produce. Concretely, diffusion models rely on estimating noise: starting from pure noise, they repeatedly estimate and remove it, creating a "hallucination" that produces content from nothing. This noise estimate can also be used to detect AI-generated images.

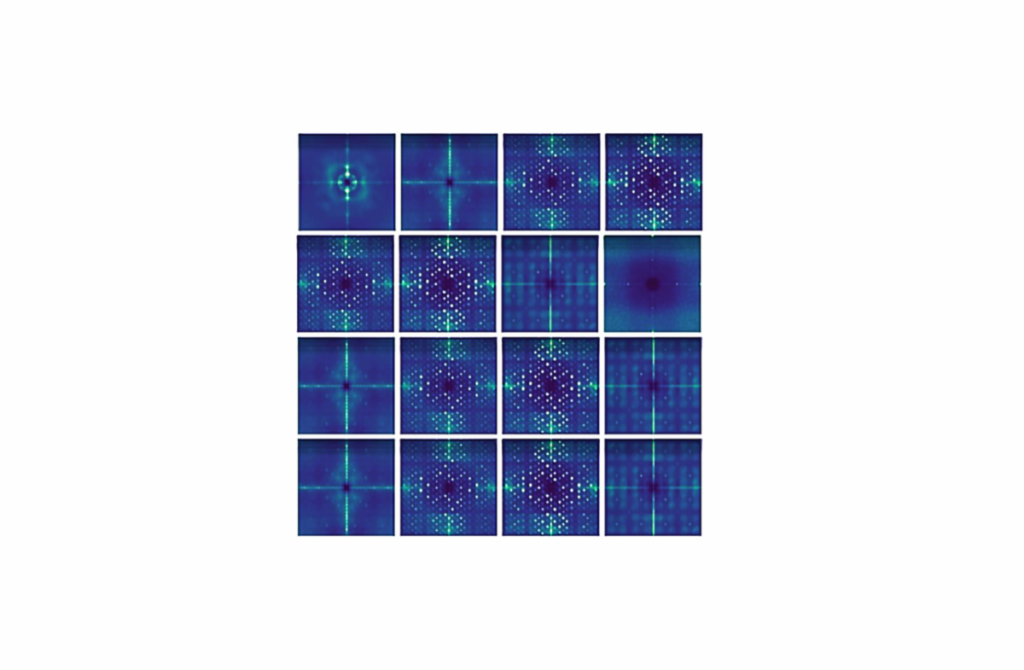

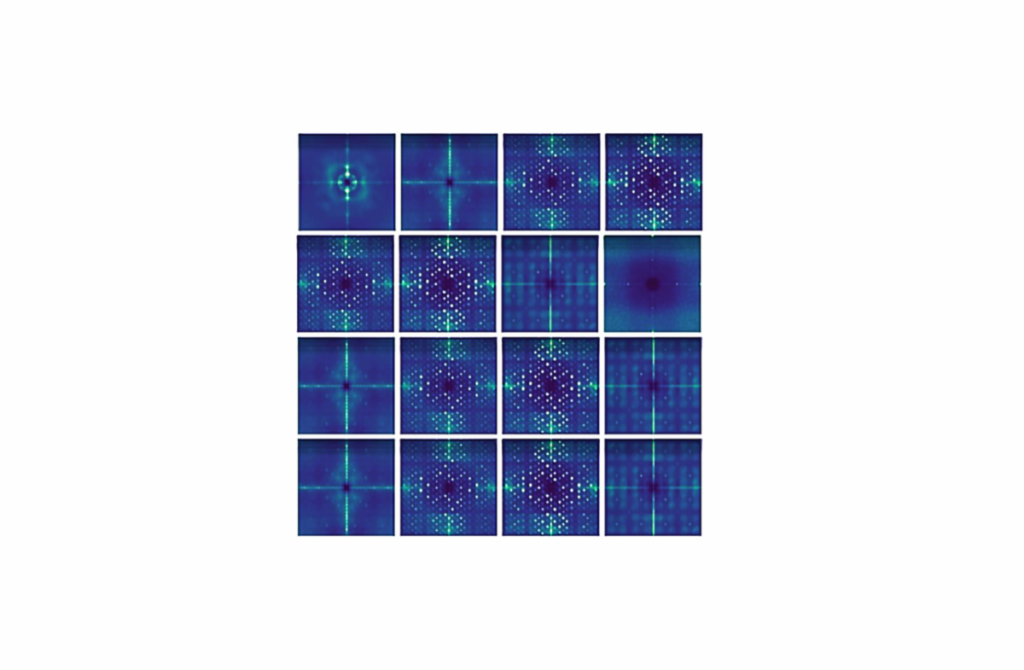

- The DCT (Discrete Cosine Transform) method, which analyzes the spatial frequencies of an image. By transforming the image from the spatial domain (pixels) to the frequency domain (a sum of waves), DCT can reveal subtle structural anomalies, often invisible to the naked eye, that appear when deepfakes are generated.
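To make the frequency-analysis and score-aggregation ideas above more concrete, here is a minimal Python sketch. It is not Thales's implementation: the function names (`dct2`, `high_freq_ratio`, `aggregate`), the 8x8 block size, the `u + v >= cutoff` definition of "high frequency", and the detector scores and weights are all hypothetical, and a plain weighted average stands in for the decision-tree metamodel described in the release.

```python
import math


def dct2(block):
    """Naive orthonormal 2-D DCT-II of a square block given as a list of lists."""
    n = len(block)

    def alpha(k):
        # Orthonormal scaling factors for the DCT-II.
        return math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)

    coeffs = [[0.0] * n for _ in range(n)]
    for u in range(n):
        for v in range(n):
            s = 0.0
            for x in range(n):
                for y in range(n):
                    s += (block[x][y]
                          * math.cos((2 * x + 1) * u * math.pi / (2 * n))
                          * math.cos((2 * y + 1) * v * math.pi / (2 * n)))
            coeffs[u][v] = alpha(u) * alpha(v) * s
    return coeffs


def high_freq_ratio(block, cutoff=None):
    """Share of non-DC spectral energy at frequency indices with u + v >= cutoff."""
    c = dct2(block)
    n = len(c)
    if cutoff is None:
        cutoff = n  # hypothetical default: the half of the spectrum farthest from DC
    cutoff = max(cutoff, 1)  # never count the DC term (0, 0) as high frequency
    total = sum(c[u][v] ** 2 for u in range(n) for v in range(n) if (u, v) != (0, 0))
    if total < 1e-12:  # (near-)constant block: no structure to analyze
        return 0.0
    high = sum(c[u][v] ** 2 for u in range(n) for v in range(n) if u + v >= cutoff)
    return high / total


def aggregate(scores, weights, threshold=0.5):
    """Weighted mean of per-detector authenticity scores in [0, 1] (1.0 = looks real).

    Hypothetical stand-in for a learned metamodel such as a decision tree.
    """
    total_weight = sum(weights[name] for name in scores)
    combined = sum(weights[name] * s for name, s in scores.items()) / total_weight
    label = "authentic" if combined >= threshold else "likely generated"
    return combined, label


if __name__ == "__main__":
    ramp = [[8 * x + y for y in range(8)] for x in range(8)]
    checker = [[255 if (x + y) % 2 == 0 else 0 for y in range(8)] for x in range(8)]
    print(f"ramp: {high_freq_ratio(ramp):.3f}")
    print(f"checkerboard: {high_freq_ratio(checker):.3f}")
    # Hypothetical scores and weights for three detectors.
    scores = {"clip": 0.8, "dnf": 0.3, "dct": 0.2}
    weights = {"clip": 1.0, "dnf": 2.0, "dct": 1.0}
    print(aggregate(scores, weights))
```

A smooth ramp keeps essentially all of its energy below the cutoff, while a pixel-level checkerboard puts almost all of it above; a real detector would learn which spectral patterns are typical of generated images rather than rely on a fixed cutoff, and would learn the per-detector weighting from labeled data instead of hard-coding it.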

The Friendly Hackers team behind this invention is part of cortAIx, Thales’ AI accelerator, which has more than 600 AI researchers and engineers, including 150 based on the Saclay plateau working on critical systems. The Group’s Friendly Hackers have developed a toolbox, the BattleBox, designed to facilitate the assessment of how robust AI-based systems are against attacks that exploit the intrinsic vulnerabilities of different AI models (including Large Language Models), such as adversarial attacks or attacks aimed at extracting sensitive information. To counter these attacks, suitable countermeasures are proposed, such as unlearning, federated learning, model watermarking and model robustification.

The Group was also a winner in 2023 of the CAID (Conference on Artificial Intelligence for Defense) challenge organized by the DGA, which consisted of identifying certain data used to train an AI, even after it had been deleted from the system to preserve its confidentiality.

Distributed by African Media Agency (AMA) for Thales.

About Thales

Thales (Euronext Paris: HO) is a global leader in high technologies specializing in three business sectors: Defense & Security, Aeronautics & Space, and Cybersecurity & Digital Identity.

It develops products and solutions that contribute to a safer, more environmentally friendly and more inclusive world.

The Group invests nearly 4 billion euros per year in Research & Development, particularly in key areas of innovation such as AI, cybersecurity, quantum, cloud technologies and 6G.

Thales has nearly 81,000 employees in 68 countries. In 2023, the Group achieved a turnover of 18.4 billion euros.

CONTACT

Press relations


Source: African Media Agency (AMA)

2024-11-22 13:01:00


**Interview with Christophe Meyer, Senior AI Expert and Technical Director at Thales’ cortAIx**

**Interviewer:** Thank you for joining us today, Christophe. Congratulations on your second-place finish at the AID Challenge with your innovative metamodel for detecting AI-generated images. Can you tell us more about what inspired this project?

**Christophe Meyer:** Thank you! The rise of AI-generated content poses significant risks, particularly in terms of disinformation and identity fraud. With deepfakes becoming more prevalent, we recognized the urgent need for robust tools to detect these manipulations. Our metamodel is the culmination of our research and expertise in AI, aimed at combating these growing threats.

**Interviewer:** It sounds like a crucial development in today’s digital landscape. How does the metamodel work in practice?

**Christophe Meyer:** The metamodel aggregates several detection methods to analyze the authenticity of an image. For instance, we use the CLIP method, which relates images to textual descriptions and checks for inconsistencies that could indicate manipulation. Similarly, our DNF method assesses the noise patterns associated with AI generation. Finally, the DCT method detects subtle anomalies in the image’s structure that typically remain invisible to the naked eye.

**Interviewer:** It seems like a comprehensive approach. What specific challenges did your team face during the development of this technology?

**Christophe Meyer:** One of the main challenges was ensuring the metamodel could adapt to various methods of image generation, as AI technology is rapidly evolving. We had to create a system that not only detects current deepfakes but can also keep up with future developments. Our interdisciplinary team worked tirelessly to refine the model, combining insights from machine learning, computer vision, and image processing.

**Interviewer:** With deepfakes being used for malicious purposes in areas like identity theft and fraud, what implications do you foresee for industries relying on identity verification?

**Christophe Meyer:** The implications are significant. As sophisticated AI-generated content becomes more widespread, industries reliant on biometric recognition or digital identity verification must adopt advanced detection tools like our metamodel. This is especially true in sectors like finance, cybersecurity, and even social media, where the authenticity of images plays a pivotal role in trust and safety.

**Interviewer:** What’s next for Thales in terms of AI research and development?

**Christophe Meyer:** We plan to continue expanding our research at cortAIx, further enhancing the metamodel and exploring more applications of AI to ensure robust defenses against evolving threats. Additionally, we are committed to collaborating with industry partners and stakeholders to implement these solutions effectively, fostering a safer digital environment for everyone.

**Interviewer:** Thank you, Christophe, for sharing your insights. It’s exciting to see how technology continues to evolve to address contemporary challenges.

**Christophe Meyer:** Thank you for having me! It’s a pleasure to discuss these critical advancements.